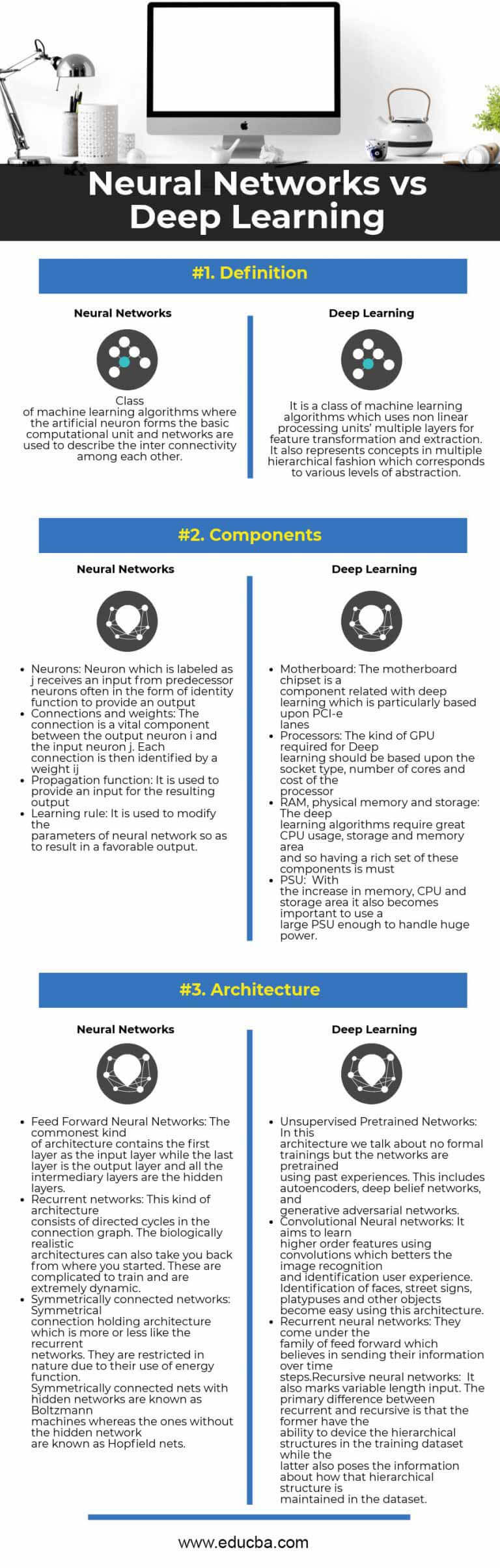

According to the resulting splitting value (3000 for income in figure 3), the training data is subdivided. Using all training data objects, at first the most important feature is identified by comparing all of the features using a statistical measure. The learning process creates the tree step by step according to the importance of the input features in the context of the specific application. Similar to Neural Networks, the tree is built via a learning process using training data. In figure 3 for example the process walks to the left, if the person has an income lower than 3000. At each node, the path to be followed depends on the value of the feature for the specific input object. a person who applies for a credit, the decision process starts from the root node and walks through the tree until a leaf is reached which contains the result. To obtain a result for a specific input object, e.g. Each node in the tree represents a feature from the input space, each branch a decision and each leaf at the end of a branch the corresponding output value (see figure 3).įigure 3: Architecture of a Decision Tree A Decision Tree represents a classification or regression model in a tree structure. Random Forests belong to the family of decision tree algorithms. Recurrent Neural Networks are designed to recognize patterns in sequences of data and Long Short-Term Memory Networks as a further development to learn long-term dependencies. Specifically for image processing, Convolutional Neural Networks were developed. Over time different variants of Neural Networks have been developed for specific application areas. Deep Networks have thousands to a few million neurons and millions of connections. The more layers the more complex the representation of an application area can be.

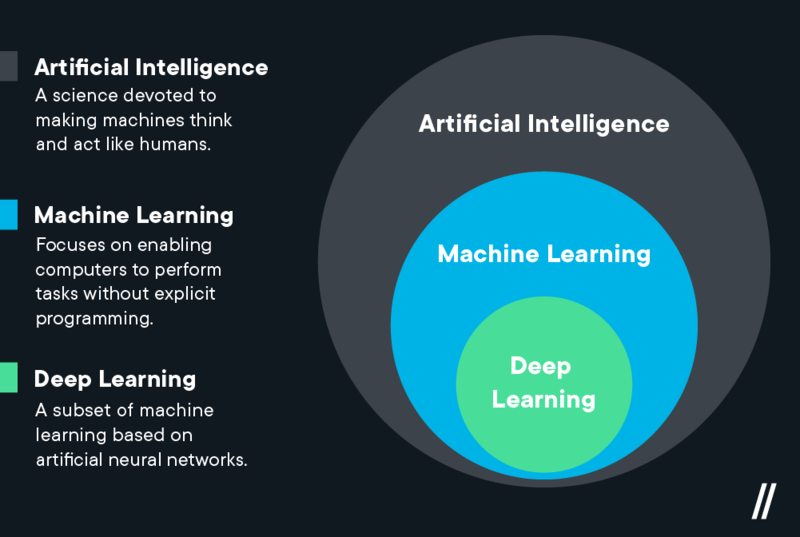

With this very general paradigm we can build nearly anything: Image classification systems, speech recognition engines, trading systems, fraud detection systems, and so on.Ī Neural Network can be made deeper by increasing the number of hidden layers. Thus, a Neural Network is a chain of trainable, numerical transformations that are applied to a set of input data and yield certain output data. Learning algorithms adjust the connection weights between the neurons according to minimize the (squared) differences between the real values of the target variables and those calculated by the network. After setting the architecture of a Neural Network (number of layers, number of neurons per layer and transformation function f() per neuron) the network is trained by searching for the weights that produce the desired output. The sum is then transformed with a function f() and passed on to the nodes of the next layer or output as a result. These are multiplied by weights w i and summed up. The node receives the outputs x i of the previous nodes to which it is connected. If we zoom into a hidden or output node, we see what is shown in figure 2. The basic architecture of a so-called multi-layer perceptron is shown in Figure 1. These units, also called neurons, are usually organized into several layers that have specific roles. The idea is to combine simple units to solve complex problems. Neural Networks represent a universal calculation mechanism based on pattern recognition.

impulsive, discount, loyal), the target for regression problems is of numerical type, like an S&P500 forecast or a prediction of the quantity of sales. While classification is used when the target to classify is of categorical type, like creditworthy (yes/no) or customer type (e.g. Both can be used for classification and regression purposes. Let’s begin with a short description of both approaches. How do Neural Networks and Random Forests work?
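The node computation described above (multiply the inputs x_i by weights w_i, sum them up, transform the sum with f()) and the squared-difference training objective can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the sigmoid used for f(), the weight values and the helper names `neuron_output`, `layer_output` and `squared_error` are all hypothetical, and a bias term is left out because the text does not mention one.

```python
import math


def sigmoid(z):
    """One possible choice for the transformation function f() (an assumption)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, f=sigmoid):
    """Multiply each input x_i by its weight w_i, sum up, then apply f()."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return f(total)


def layer_output(inputs, weight_rows, f=sigmoid):
    """A layer is a list of neurons that all receive the same inputs."""
    return [neuron_output(inputs, row, f) for row in weight_rows]


def squared_error(targets, predictions):
    """Sum of squared differences between real and calculated values."""
    return sum((t - p) ** 2 for t, p in zip(targets, predictions))


# Tiny network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
# All weights are made-up numbers; training would adjust them to reduce the loss.
hidden = layer_output([1.0, 0.5], [[0.4, -0.2], [0.3, 0.8]])
prediction = neuron_output(hidden, [0.5, -0.5])
loss = squared_error([1.0], [prediction])
```

A learning algorithm would repeatedly change the weights so that `loss` shrinks over the training data; that search is not shown here.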
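The walk through a Decision Tree and the choice of a splitting value can be sketched as follows. The text only says that features are compared "using a statistical measure"; Gini impurity is used here purely as an assumed example of such a measure. The dictionary layout of the tree, the leaf results `"reject"` and `"approve"`, and the function names are likewise hypothetical; only the income threshold of 3000 comes from the text.

```python
def predict(node, obj):
    """Start at the root and follow branches until a leaf holds the result."""
    while "result" not in node:
        # Walk left if the feature value is below the splitting value.
        branch = "left" if obj[node["feature"]] < node["threshold"] else "right"
        node = node[branch]
    return node["result"]


def gini(labels):
    """Gini impurity of a list of class labels (0.0 means perfectly pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_threshold(values, labels):
    """Try each candidate splitting value and keep the one with the lowest
    size-weighted impurity of the two resulting subsets."""
    best_t, best_score = None, float("inf")
    for t in sorted(set(values))[1:]:
        left = [l for v, l in zip(values, labels) if v < t]
        right = [l for v, l in zip(values, labels) if v >= t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best_score:
            best_t, best_score = t, score
    return best_t, best_score


# Hypothetical one-split tree using the income threshold from the text.
tree = {
    "feature": "income",
    "threshold": 3000,
    "left": {"result": "reject"},    # made-up leaf value
    "right": {"result": "approve"},  # made-up leaf value
}

decision = predict(tree, {"income": 2500})
split = best_threshold([1000, 2000, 3000, 4000], ["no", "no", "yes", "yes"])
```

Building a full tree would apply `best_threshold` to every feature, split the training data on the winner, and repeat on each subset.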
